Hardware Security

Exploring Architectural Vulnerability of Deep Learning Architectures against Hardware Intrinsic Attacks (Lead: Hasan)

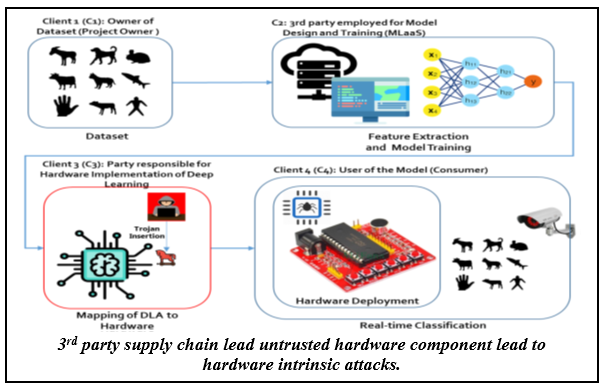

Deep learning architectures (DLAs) have shown impressive performance in computer vision, natural language processing, autonomous vehicles, smart manufacturing, and other domains. Many DLAs rely on cloud computing for classification because of their high computation and memory requirements. Privacy and latency concerns arising from cloud computing have inspired the deployment of DLAs on embedded hardware accelerators. To shorten time-to-market and gain access to global expertise, state-of-the-art techniques for deploying DLAs on hardware accelerators are outsourced, often to untrusted third parties. This raises security concerns, because hardware intrinsic attacks can be inserted into such accelerators. Existing hardware intrinsic attacks reported in the literature have a few limitations; for example, they require full knowledge of the DLA and offer no qualitative means of ensuring that triggering will occur. This research investigates hardware intrinsic attacks that are both stealthier and more practical to implement, leveraging statistical properties of the layer-by-layer output of a DLA. It also examines potential threat models that arise when a DLA is distributed in an Edge Intelligence environment for AIoT applications. Preliminary results show that such attacks can be very stealthy and require minimal resources. Moreover, this research will propose a defense mechanism against such vulnerabilities that employs security checkpoints via a trusted network node acting as a defender.

Contact Us

Cybersecurity Education, Research and Outreach Center

Office Hours: Monday–Friday, 8AM–4:30PM CDT

(931) 372-3519 | ceroc@tntech.edu

Street Address:

Cybersecurity Education, Research and Outreach Center (CEROC)

Ashraf Islam Engineering Building (AIEB) 238

1021 Stadium Dr.

Cookeville, TN 38501

Mailing Address:

Tennessee Tech University

Cybersecurity Education, Research and Outreach Center (CEROC)

Campus Box 5134

Cookeville, TN 38505